A satellite photo appears online.

It shows a devastated military base. Burned radar equipment. Destroyed infrastructure.

The image spreads across social networks within minutes. Analysts debate it. Commentators quote it. Politicians reference it.

There is only one problem.

The satellite image never existed.

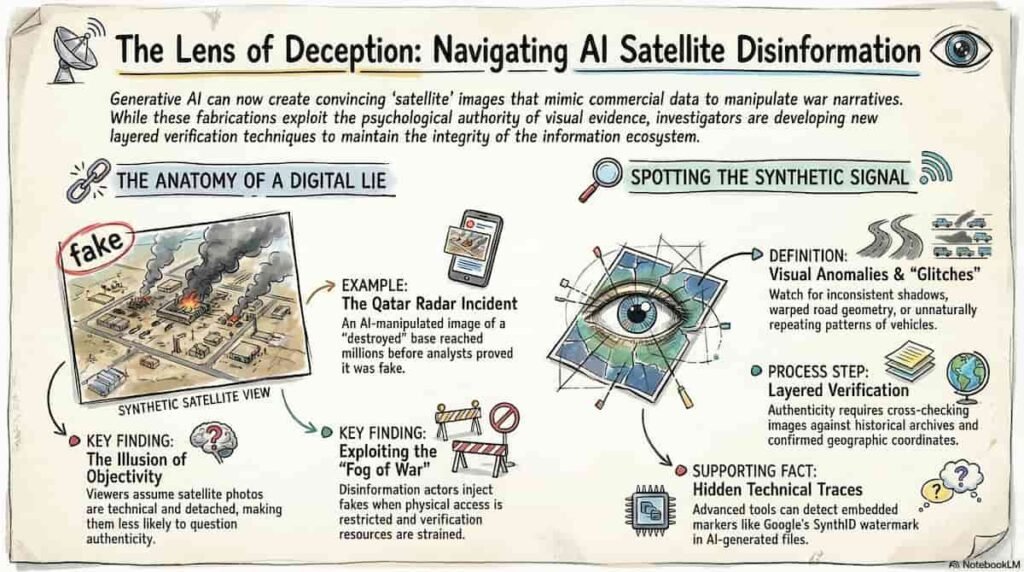

Recent conflicts show how AI satellite imagery disinformation is becoming one of the most effective propaganda tools of the digital age. When synthetic visuals look credible, even experienced observers struggle to separate fact from fabrication.

And the battlefield is no longer just physical territory. It is the information ecosystem.

The Rise of Synthetic Satellite Images

Satellite imagery once carried a reputation for near-scientific credibility. Governments relied on it. Journalists quoted it. OSINT investigators used it to verify claims in real time.

Generative AI has changed that equilibrium.

Image models now create convincing overhead visuals that resemble commercial satellite data. Roads align. Shadows look realistic. Infrastructure appears coherent.

The result: fabricated battlefield evidence.

During the recent escalation involving the United States, Israel and Iran, a widely shared image claimed to show a destroyed US radar installation in Qatar. The picture circulated through social networks and media commentary before analysts identified a crucial detail.

The “satellite photo” had been generated using AI and manipulated from an older Google Earth image of a different military facility.

The visual spread quickly across several platforms and accumulated millions of views before verification occurred.

In an environment saturated with rapid-fire updates, the correction rarely travels as far as the original image.

Why AI Satellite Imagery Works So Well as Propaganda

Visual evidence has always shaped public perception during conflicts. Photographs carry emotional authority that written claims rarely match.

Synthetic satellite imagery exploits that psychological reflex.

Visual credibility

Satellite photos feel objective. They appear technical and detached from narrative framing.

Viewers assume machines capture them automatically. Few imagine they could be fabricated.

Speed of distribution

Social platforms reward immediacy.

A dramatic visual posted during a breaking event gains momentum before verification begins.

Information fog during conflict

Wars produce fragmented information flows. Access to affected regions may be restricted. Governments impose censorship.

Under those conditions, OSINT communities rely heavily on satellite images.

Disinformation actors understand that dynamic.

They inject synthetic imagery directly into the investigative ecosystem.

The OSINT Community Under Pressure

Open-source intelligence analysts built their reputation by verifying claims using public data: satellite imagery, videos, social posts and geolocation.

AI manipulation complicates that mission.

Researchers tracking recent conflicts noticed a rising volume of altered geospatial imagery circulating online.

Some images contain subtle distortions:

- inconsistent shadows

- repeating vehicles or structures

- impossible viewing angles

- blurred or warped infrastructure

Other fabrications rely on simpler editing techniques. Damage indicators or fire effects are layered on top of legitimate satellite photos.

At first glance, both variants appear plausible.

The challenge for analysts lies in the time pressure. Verification requires careful comparison with previous imagery, geographic data and known infrastructure layouts.

Disinformation spreads much faster than verification.
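Part of that comparison with previous imagery can be automated. The sketch below is a minimal illustration, not a tool used by any analyst mentioned here. It assumes the suspect image and an archived reference are already aligned to the same area, have the same size, and are available as grayscale pixel grids. The function name `changed_tiles` and the `tile` and `threshold` parameters are hypothetical choices made for this example.

```python
from typing import List, Tuple

Grid = List[List[int]]  # grayscale pixel values, 0-255 (assumed input format)


def changed_tiles(reference: Grid, suspect: Grid, tile: int = 4,
                  threshold: float = 20.0) -> List[Tuple[int, int]]:
    """Return (tile_row, tile_col) indices where the two images differ strongly.

    A tile is flagged when the mean absolute pixel difference inside it
    exceeds `threshold`. Both values are illustrative, not calibrated.
    """
    if len(reference) != len(suspect) or len(reference[0]) != len(suspect[0]):
        raise ValueError("images must be aligned and the same size")

    rows, cols = len(reference), len(reference[0])
    flagged = []
    # Walk the image in non-overlapping tile x tile squares.
    for top in range(0, rows - tile + 1, tile):
        for left in range(0, cols - tile + 1, tile):
            total = 0
            for r in range(top, top + tile):
                for c in range(left, left + tile):
                    total += abs(reference[r][c] - suspect[r][c])
            if total / (tile * tile) > threshold:
                flagged.append((top // tile, left // tile))
    return flagged
```

A flagged tile only says that something changed between the two images. Real damage changes pixels too, so the output tells an analyst where to look first, not what to conclude.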

Imposter OSINT Accounts and the Trust Crisis

Another emerging trend complicates the situation: fake OSINT profiles.

Social networks now host accounts posing as independent investigators. They publish fabricated satellite visuals while adopting the language and style of credible analysts.

The tactic aims to erode trust in the broader OSINT ecosystem.

When viewers struggle to distinguish authentic researchers from impersonators, every piece of analysis becomes suspect.

Information warfare strategists have long targeted media credibility. AI imagery simply accelerates that effort.

The Technical Clues Hidden in Fake Satellite Images

Even sophisticated synthetic visuals leave traces.

Investigators examining manipulated war imagery identified recurring signals that reveal AI generation.

Repeated visual patterns

Generative models sometimes duplicate objects unintentionally. Rows of identical vehicles or buildings can appear, sometimes in positions that match older satellite images of the same site.
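A toy version of a duplicate search shows the idea. This sketch looks for pixel-identical blocks in a grayscale grid, which is closer to catching clone-stamp editing than AI output, since generated repetitions are rarely exact copies. Production forensic tools rely on more robust feature matching. The function name `find_duplicate_blocks` and the `block` and `min_range` parameters are assumptions made for this example.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Grid = List[List[int]]  # grayscale pixel values, 0-255 (assumed input format)


def find_duplicate_blocks(image: Grid, block: int = 4,
                          min_range: int = 10) -> List[List[Tuple[int, int]]]:
    """Group the top-left corners of block x block windows with identical pixels.

    Windows whose brightness range is below `min_range` are skipped, because
    flat areas such as desert or water match each other trivially.
    """
    seen: Dict[Tuple[int, ...], List[Tuple[int, int]]] = defaultdict(list)
    rows, cols = len(image), len(image[0])

    # Slide the window one pixel at a time so unaligned copies are still found.
    for top in range(rows - block + 1):
        for left in range(cols - block + 1):
            pixels = tuple(
                image[r][c]
                for r in range(top, top + block)
                for c in range(left, left + block)
            )
            if max(pixels) - min(pixels) < min_range:
                continue
            seen[pixels].append((top, left))

    # Keep only pixel patterns that occur at more than one position.
    return [positions for positions in seen.values() if len(positions) > 1]
```

Because the window slides, a single copied object usually produces a cluster of matching positions around it, not one hit. A cluster with a constant offset between the paired positions is the pattern worth inspecting by eye.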

Distorted geographic details

Road geometry, infrastructure alignment or terrain features occasionally break physical logic.

AI watermark signals

Certain images carry hidden watermarks embedded by generative models.

In one widely circulated fake, analysts detected a SynthID watermark, a marker associated with Google AI image generation systems.

Nonsense coordinates

Some fabricated images display random or meaningless geographic coordinates.
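Checking a coordinate stamp is one of the few steps that needs no imagery at all. The sketch below assumes the coordinates have already been read off the image as decimal degrees. It tests whether they are valid and whether they fall near the location the post claims to show. The function names, the 25 km tolerance and the sample coordinates in the usage lines are illustrative assumptions, not values from any real case.

```python
import math
from typing import Tuple

EARTH_RADIUS_KM = 6371.0  # mean Earth radius

Point = Tuple[float, float]  # (latitude, longitude) in decimal degrees


def is_valid_coordinate(lat: float, lon: float) -> bool:
    """Latitude must lie in [-90, 90] and longitude in [-180, 180]."""
    return -90.0 <= lat <= 90.0 and -180.0 <= lon <= 180.0


def haversine_km(a: Point, b: Point) -> float:
    """Great-circle distance between two points, in kilometres."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat = lat2 - lat1
    dlon = lon2 - lon1
    h = (math.sin(dlat / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2)
    # Clamp to 1.0 to guard against floating-point overshoot near antipodes.
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(min(1.0, h)))


def coordinate_matches_claim(stamped: Point, claimed: Point,
                             tolerance_km: float = 25.0) -> bool:
    """True when the stamped coordinate is valid and close to the claimed site."""
    return (is_valid_coordinate(*stamped)
            and haversine_km(stamped, claimed) <= tolerance_km)


# Hypothetical usage: a stamp with an impossible latitude fails immediately.
print(coordinate_matches_claim((95.0, 10.0), (25.0, 51.0)))
```

A coordinate that passes this check proves little on its own, since a forger can copy a real one. A coordinate that fails it is a cheap and fast reason to distrust the image.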

These small irregularities often expose manipulated visuals long before official sources respond.

Why Satellite Intelligence Still Matters

Despite the surge in synthetic imagery, real satellite intelligence remains one of the most reliable verification channels available.

Commercial satellite providers capture high-resolution images of conflict zones in near real time. These datasets allow investigators to compare suspected disinformation against verified imagery.

One example illustrates the value of independent satellite analysis.

Following an attack near Niamey airport in Niger, online posts circulated pictures claiming the civilian terminal was on fire. Analysts at a satellite intelligence company reviewed their latest imagery and found no damage.

The viral photos were almost certainly AI-generated fabrications.

Without satellite verification, the false narrative could have shaped public perception of the event.

The Strategic Consequences of AI War Imagery

Synthetic satellite images may look like internet curiosities. Their impact reaches far beyond social media.

Public opinion

A convincing visual can alter how audiences interpret military events. Images suggesting successful strikes or catastrophic losses influence narratives quickly.

Political pressure

Governments often face immediate questions after viral imagery appears online. Even false visuals may shape diplomatic debates.

Financial markets

Investors monitor geopolitical signals constantly. Fabricated visuals suggesting infrastructure damage or military escalation can trigger market reactions.

When misinformation spreads faster than verification, the economic consequences become real.

A New Era for Geospatial Verification

The emergence of AI satellite imagery disinformation marks a turning point for OSINT investigators and journalists.

Visual evidence alone no longer guarantees authenticity.

Verification now requires layered analysis:

- cross-checking with historical satellite archives

- comparing infrastructure layouts across multiple providers

- examining metadata and coordinate references

- evaluating visual anomalies in terrain and shadows
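The shadow check in particular lends itself to a simple numeric test. Within one small scene the sun is effectively at a single position, so shadows cast by upright objects on flat ground should point in nearly the same direction. The sketch below assumes an analyst has manually marked each shadow as a vector from the base of an object to the tip of its shadow, in image pixels. The function name `shadow_azimuth_spread` is hypothetical, and any cutoff applied to its result would need to be chosen by the analyst.

```python
import math
from typing import List, Tuple

Vector = Tuple[float, float]  # (dx, dy) from object base to shadow tip


def shadow_azimuth_spread(shadows: List[Vector]) -> float:
    """Return the largest deviation, in degrees, from the mean shadow direction.

    The mean is a circular mean, so directions near 359 and 1 degrees
    average to roughly 0 instead of 180.
    """
    if not shadows:
        raise ValueError("at least one shadow vector is required")

    angles = [math.atan2(dy, dx) for dx, dy in shadows]
    mean = math.atan2(sum(math.sin(a) for a in angles),
                      sum(math.cos(a) for a in angles))

    def deviation(angle: float) -> float:
        # Smallest absolute angular distance between angle and the mean.
        d = abs(angle - mean) % (2 * math.pi)
        return min(d, 2 * math.pi - d)

    return math.degrees(max(deviation(a) for a in angles))
```

Parallel shadows give a spread close to zero regardless of their length. A large spread is a prompt for closer inspection, not proof of fabrication, because sloped terrain, leaning objects and off-nadir viewing angles all bend shadows in genuine imagery.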

Digital investigators already practice these techniques. The difference now lies in scale. AI allows disinformation actors to generate fabricated imagery in large volumes.

The verification process must evolve just as quickly.

The Information Battlefield Is Expanding

Modern wars unfold simultaneously across two arenas.

The physical battlefield determines territorial control.

The information battlefield shapes perception.

Synthetic satellite imagery sits at the intersection of both. A single fabricated visual can travel across continents before analysts verify its authenticity.

For journalists, OSINT researchers and policymakers, the lesson is clear:

visual evidence must always face scrutiny.

Even when the image looks like it came from space.

Want to Learn More?

If you follow OSINT, digital investigations or AI-driven disinformation, this topic will only grow more relevant.

Join the community exploring these challenges:

Newsletter

https://projectosint.substack.com/

Telegram

https://t.me/osintaipertutti

https://t.me/osintprojectgroup

Because the next viral satellite image might not come from orbit.

It might come from an algorithm.