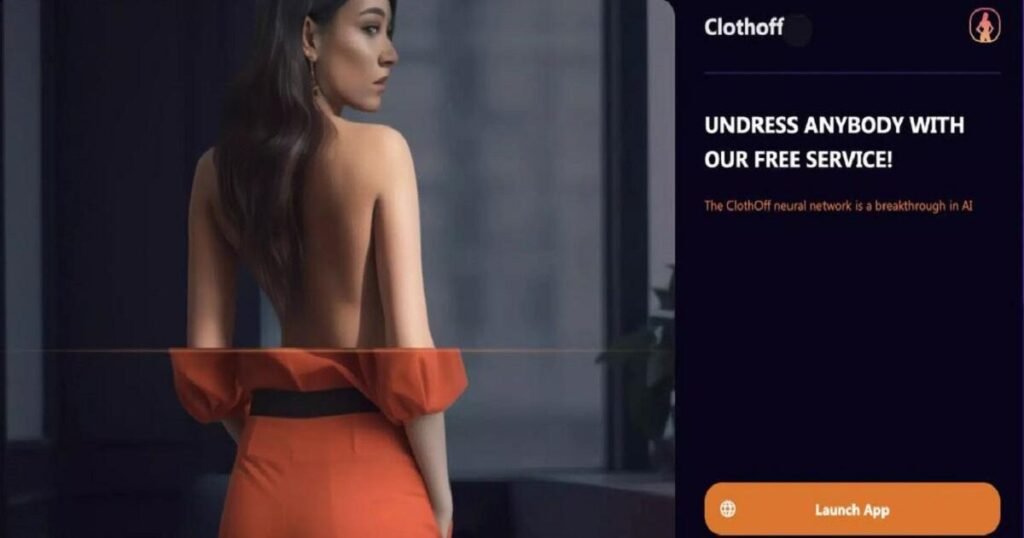

The emergence of deepfake technology has transformed the landscape of digital content creation, raising significant ethical concerns and carrying serious personal consequences for the people it targets. One such application, Clothoff, has come under fire for its role in producing non-consensual deepfake pornography. Recently, Clothoff announced plans to support victims of AI misuse, prompting debate over its legitimacy and intentions.

Amidst this controversy, the partnership between Clothoff and ASU Label has sparked further debate about accountability in the tech community. This article delves into Clothoff’s controversial history, its claims of support for AI victims, and the broader implications of deepfake technology.

What is Clothoff and why is it controversial?

Clothoff is a deepfake porn application that has gained notoriety for its production of non-consensual content. Users can create altered videos using the likeness of individuals without their consent, which raises grave ethical and legal issues. The controversy surrounding Clothoff primarily stems from its facilitation of harmful content, impacting the lives of many innocent victims.

The app has faced backlash from various advocacy groups who argue that it perpetuates a culture of violence against women and undermines personal autonomy. While Clothoff has positioned itself as a tool for creativity, many view it as a dangerous platform that exploits individuals for entertainment.

In light of its controversies, the app’s claims of supporting victims of AI misuse have been met with skepticism. The public questions whether Clothoff’s intentions are genuine or merely a facade to distract from the damage it has caused.

How does Clothoff claim to support AI victims?

Recently, Clothoff announced its intention to donate funds to support victims affected by AI-generated content. This announcement coincided with its partnership with ASU Label, an organization that claims to advocate for rights in the AI era. However, the veracity of these claims is under scrutiny.

Clothoff has stated that a portion of its profits will be allocated to assist victims of non-consensual deepfake pornography. This initiative aims to help those who have been harmed by the misuse of technology, signaling a potential shift in how the app approaches its impact on society. The forms of support it claims to offer are:

- Financial assistance for victims.

- Awareness campaigns about the risks of deepfake technology.

- Collaborations with advocacy groups for support.

Despite these claims, the lack of transparency surrounding ASU Label has led to further questions about the legitimacy of Clothoff’s efforts. Critics argue that without clear operational details, the initiative may not lead to tangible support for those in need.

What are the ethical concerns surrounding ASU Label?

The partnership between Clothoff and ASU Label raises significant ethical questions. ASU Label has been criticized for its lack of transparency, with concerns mounting over its registration and operational credibility. Many advocates worry that the organization may not be equipped to genuinely assist victims of AI misuse.

Transparency is critical for AI-related organizations, especially those dealing with content as sensitive as deepfakes. Ethical considerations must guide the actions of developers and companies involved in AI. Stakeholders demand accountability from organizations like ASU Label to ensure that resources are allocated effectively to support those harmed.

Moreover, the ethical responsibility of AI developers in combating misuse cannot be overstated. Companies must prioritize the protection of individual rights and dignity in their technology’s applications.

Is ASU Label a legitimate organization?

The legitimacy of ASU Label has been called into question due to its vague operational details. Critics argue that without proper verification and transparency, it is difficult to trust the organization’s mission to help victims of AI misuse. Additionally, experts in the field, including Kolina Koltai, have stressed the need for credible organizations in the realm of deepfake technology.

Without a clear framework and accountability measures, ASU Label’s effectiveness in supporting victims remains uncertain. This lack of clarity raises broader questions about the accountability of organizations that claim to advocate for rights in the rapidly evolving landscape of AI.

What impact do deepfake apps have on victims?

Deepfake technology can have devastating consequences for victims, often leading to emotional distress, reputational damage, and a loss of personal autonomy. Victims of non-consensual deepfake pornography face significant psychological challenges, as their likenesses are used against their will, exacerbating feelings of violation and helplessness.

The implications of such technology extend beyond individual victims, affecting societal perceptions of consent and privacy. As deepfake technology becomes more sophisticated, the potential for misuse increases, highlighting the urgent need for protective measures.

Moreover, research indicates that the general public is increasingly aware of the risks associated with deepfakes. This growing awareness underscores the importance of educating users about the potential harms and ethical concerns surrounding deepfake applications.

How can we address online harms related to deepfakes?

Tackling online harms related to deepfakes requires a multifaceted approach. First and foremost, there is a need for greater regulation and oversight of deepfake technology and its applications. Governments and organizations must collaborate to establish legal frameworks that protect individuals from non-consensual content.

- Implement stricter regulations on deepfake technologies.

- Enhance public awareness campaigns about the risks of deepfakes.

- Encourage collaboration between tech companies and advocacy groups.

Furthermore, education plays a pivotal role in combating the misuse of deepfake technology. Informing users about the potential dangers helps build a digital landscape in which individuals are equipped to protect themselves against such harms.

Experts emphasize the importance of ethical considerations in the development and deployment of AI technologies. Developers must take responsibility for the consequences of their creations, ensuring that they do not contribute to online harms.

Questions related to the impact of Clothoff and AI misuse

What is Clothoff?

Clothoff is a deepfake porn app that allows users to create altered videos using people’s likenesses without their consent. This app has been associated with significant controversy due to its contribution to non-consensual deepfake pornography, a disturbing trend that has drawn widespread condemnation.

How does Clothoff help AI victims?

Clothoff claims to assist victims of AI misuse by donating a portion of its profits to support those affected by non-consensual deepfakes. However, the authenticity of these claims remains questionable given the lack of transparency surrounding its partnership with ASU Label.

What is ASU Label’s role?

ASU Label claims to advocate for the rights of individuals affected by AI misuse. Its partnership with Clothoff is intended to enhance support for victims. However, concerns about its legitimacy and operational transparency raise doubts about its effectiveness in fulfilling this role.

Are there ethical concerns with Clothoff?

Yes, significant ethical concerns exist regarding Clothoff’s operations. The app’s facilitation of non-consensual deepfake content raises questions about the responsibilities of developers in ensuring their technology does not harm individuals. Furthermore, the ethical implications of its partnership with ASU Label are under scrutiny.

How do deepfakes impact victims?

Deepfakes can have profound psychological and social impacts on victims, often leading to trauma, reputational damage, and violations of privacy. The emotional toll can be severe as victims deal with the ramifications of having their likeness used inappropriately.

What measures are in place to prevent online harms?

Addressing online harms related to deepfakes involves regulatory measures, educational initiatives, and advocacy efforts. Stricter regulations on deepfake technology, public awareness campaigns, and collaborations between tech companies and advocacy groups are essential to mitigate risks associated with these technologies.