An analyst runs an aggressive SpiderFoot scan against a corporate domain. Minutes later, the target’s leadership starts searching for his identity on LinkedIn. The investigator was investigating. He was also being investigated.

This is not an edge case. It is the normal functioning of an ecosystem where every data collection action is potentially visible, where every query leaves a trace, and where the boundary between researcher and target is thinner than vendors admit in their marketing materials.

The global OSINT market now exceeds $29 billion — grown from $5.02 billion in 2018 at a 24.7% CAGR. Behind that growth sits a structural architecture that market reports consistently flatten. This analysis breaks it open.

What the Growth Numbers Don’t Show

The expansion of the OSINT market is not primarily a story about better tools. It is a story about industrialized threats forcing industrialized responses. Adversaries now routinely use publicly available data to orchestrate supply chain attacks, coordinate transnational cybercrime, and run geopolitical disinformation campaigns at scale. Platforms responded by moving from manual web scraping to automated correlation engines, NLP pipelines, and predictive machine learning.

The result: intelligence gathering has professionalized. What a skilled analyst could do manually in a week, a configured platform does in minutes. But professionalization also means stratification — between tools accessible to independent researchers and tools priced exclusively for Fortune 100 security operations.

Recorded Future charges small organizations $50,000–$100,000 annually. Mid-sized to enterprise deployments exceed $250,000–$500,000 per year. Babel Street and Intelligence X follow similar enterprise-only pricing logic. The consequence is a two-tier market: expensive integrated platforms for institutional actors, and open-source or low-cost tools for everyone else. Understanding which tier applies to a given investigation defines the entire operational workflow.

System Map: Where the Data Actually Lives

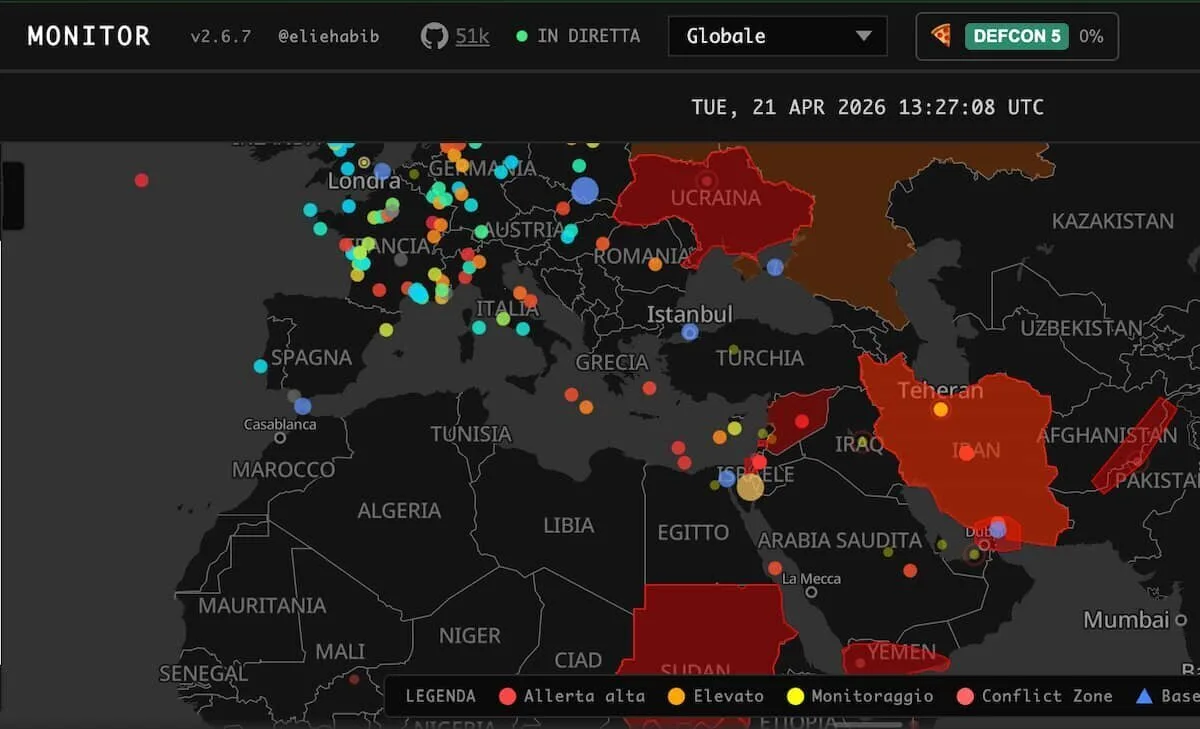

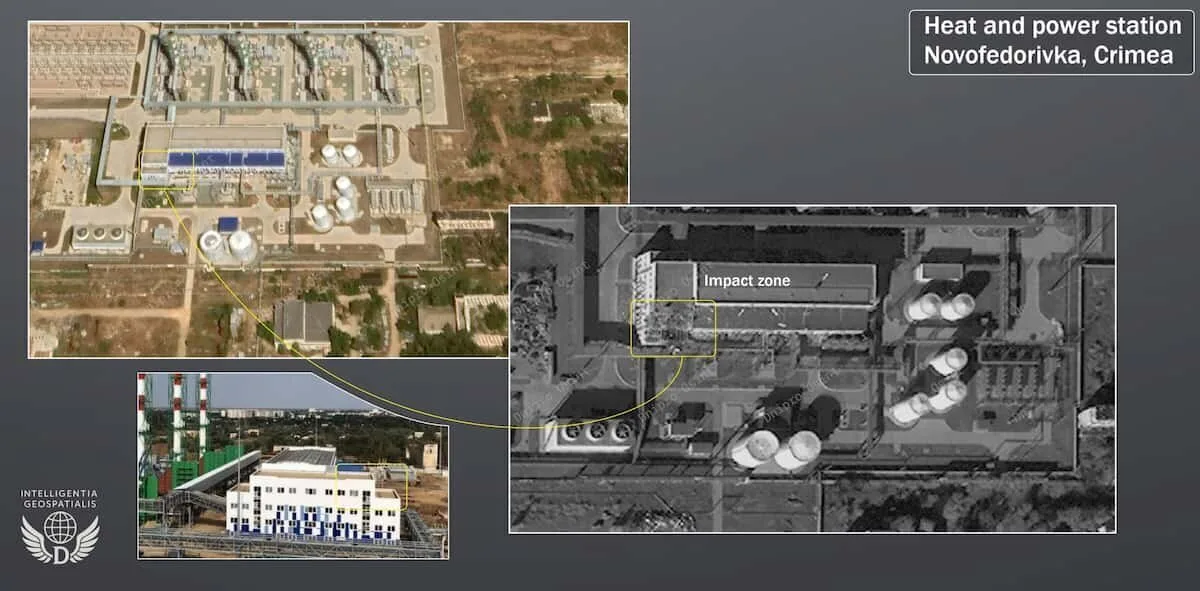

The 2026 OSINT landscape is structured around three primary domains. Human intelligence focuses on digital footprints, personnel profiling, and credential exposure. Technical intelligence maps network infrastructure, discovers exposed misconfigurations, and identifies structural vulnerabilities. Geospatial intelligence analyzes spatial data, localized telemetry, and regional risks.

Each domain has its specialist platforms — and each platform has a specific architectural logic that determines what it can and cannot find.

Shodan does not index web content. It crawls service banners, open ports, default credentials, and vulnerable software versions associated with exposed devices — from corporate web servers to industrial control systems to unpatched consumer webcams. Its database is a snapshot in time, not a live feed. Analysts have documented cases where expired SSL certificates and long-remediated open ports still appeared in results, creating diagnostic confusion when used without supplementary active scanning tools like Nmap.

Intelligence X operates on a fundamentally different logic: permanent archival. Unlike standard search engines that index the current state of a page, Intelligence X preserves data permanently — dark web posts, data leaks, I2P/Tor content — even after the original source has been deleted. Access requires precise selectors: email addresses, IPv6 CIDR blocks, Bitcoin addresses, UUIDs. Keyword searches don’t work here. If you don’t know what you’re looking for at a technical level, the platform returns nothing useful.

OSINT Industries inverts this entirely. Instead of archival depth, it provides real-time breadth: input a single selector — phone number, email, username — and the platform queries hundreds of live public sources simultaneously, deliberately avoiding static databases. Investigators have used it to identify predatory individuals on dating platforms and dismantle counterfeit pharmaceutical distribution networks by tracing operator digital footprints.

Maltego sits above all of these as a visualization and relationship-mapping layer. Its “Transforms” are executable scripts that query external data sources and return results as visual nodes on an investigation graph. The platform connects entities across 120+ data sources — IP addresses, domains, corporate registrations, human profiles — surfacing hidden network structures invisible inside raw datasets. Its recent expansion to Maltego One adds web-based access alongside the traditional desktop application.

SpiderFoot operates as an automated correlation engine. When a scan targeting a domain or IP initiates, results from one module automatically trigger subsequent modules — a cascading publisher/subscriber model across 200+ data sources. Version 4.0 added a YAML-configurable correlation engine that links collected data and flags potential vulnerabilities without manual intervention.

Operational Method: How an Investigation Actually Runs

Step 1 — Define the selector and the domain. Before touching any tool, establish whether the investigation targets a human entity (email, phone, username), a technical asset (domain, IP, ASN), or a geospatial entity (location data, regional network activity). The selector determines the platform stack.

Step 2 — Passive collection first. Query Shodan for infrastructure exposure. Use Intelligence X for historical data and breach records tied to the target’s identifiers. Run OSINT Industries for real-time digital footprint correlation. None of these queries broadcast investigative intent to the target — they are passive reads against indexed databases.

Step 3 — Map relationships with Maltego. Feed initial findings into Maltego. Run Transforms against domains, IPs, and any human-linked identifiers. The graphical output will surface secondary connections — linked registrations, shared hosting infrastructure, associated accounts — that don’t appear in raw data.

Step 4 — Automated deep scan (controlled). Deploy SpiderFoot only after establishing passive baselines. Selectively disable active modules — the ones that directly query or probe the target’s infrastructure — before initiating. Active modules are the operational security risk: they generate traffic visible to the target and to platforms like LinkedIn that track external reconnaissance patterns.

Step 5 — Verification through triangulation. No single platform output constitutes verified intelligence. Cross-check findings: if Shodan flags an exposed service, verify current status with Nmap. If OSINT Industries links a phone number to an account, corroborate through a second selector query. If Maltego surfaces a corporate registration connection, validate against primary registrar records.

Step 6 — SIEM/SOAR integration for continuous monitoring. At institutional scale, intelligence platforms don’t run as standalone tools — they feed into SIEM/SOAR architectures. A SIEM detects anomalous internal network traffic communicating with an unknown external IP. It triggers a SOAR playbook. The SOAR queries Recorded Future’s Intelligence Graph for risk scoring on that IP, or launches a targeted SpiderFoot scan against the associated domain. Enrichment happens automatically, without analyst intervention. Recorded Future integrates directly into Microsoft Sentinel via Logic Apps and specialized Playbooks, pulling curated Risk Lists — malicious IPs, command-and-control server communications, newly flagged URLs — directly into the SIEM’s threat indicator tables.

Critical Issues: What Breaks in Practice

API restriction is the primary operational friction in 2026. Following the mass harvesting of public data for LLM training, social networks aggressively locked down public endpoints — stringent rate limits, sophisticated CAPTCHAs, advanced bot detection. Bulk collection now frequently produces immediate access blocks, timeout errors, and permanent IP bans. Workarounds exist: headless browsers, rotating proxies, automation scripts. Each introduces legal exposure — fake accounts created to harvest restricted content represent direct terms-of-service violations and, in several jurisdictions, potential CFAA liability.

Data freshness is not guaranteed. Shodan’s database is a temporal snapshot. Intelligence X preserves historical data that may no longer reflect current reality. The gap between what a platform shows and what currently exists on a target’s infrastructure can be significant — and acting on stale data produces investigative errors.

Automated tools generate noise at scale. SpiderFoot scans without precise module targeting can return tens of thousands of data points. The analyst overhead required to sift and validate that output negates the efficiency gain. The same problem applies to enterprise threat feeds: Recorded Future users have documented the experience of expensive, aggregated feeds delivering historical or recycled indicators of compromise rather than active, targeted intrusions — intelligence that functions more as “polished RSS” than genuine threat visibility.

Alert fatigue is structural, not accidental. Even the most expensive platforms require rigorous internal integration and localized tuning before delivering meaningful signal. A platform configured generically will generate generic alerts. The investment required to tune a Recorded Future deployment to an organization’s specific network environment is substantial — and often not factored into the initial procurement decision.

Analytical Layer: Patterns and Contradictions

The dominant contradiction in the 2026 OSINT market is the gap between platform capability and operational utility. Tools are more powerful than ever. Simultaneously, the conditions under which they operate — API restrictions, bot detection, rate limits — are more hostile than ever. The market sells capability; practitioners buy friction.

A second structural tension: the legal premise underlying all OSINT activity is under active revision. The assumption that public data is freely usable is legally false under GDPR and CCPA. When platforms scrape, aggregate, and index PII — physical addresses, phone numbers, hidden metadata extracted via tools like FOCA — they become data processors subject to strict regulatory oversight. GDPR Article 17, the Right to be Forgotten, creates a direct collision with Intelligence X’s core value proposition of permanent archival. The platform processes takedown requests, but retains data when public interest — documented criminal activity, political corruption, statistical research — overrides the individual’s erasure right. That determination is made by the platform, not by a court.

The AI integration layer adds a third contradiction. AI systems can synthesize millions of individually innocuous public data points into highly sensitive private revelations — without ever accessing protected databases. This capability challenges conventional privacy law at a structural level: privacy frameworks were designed around data that is either public or private, not around the emergent sensitivity created by large-scale aggregation of public signals. The World Economic Forum’s Global Risks Report 2026 flags this directly, noting that as geoeconomic confrontation intensifies, AI will be weaponized to target supply chains and corporate networks through exactly this aggregation logic.

The most effective response emerging from advanced threat intelligence teams is localized knowledge graphs: scraping heterogeneous data from public sources in controlled, reproducible batches, normalizing that data, converting it into RDF format, mapping it to common ontologies, and loading it into local triplestores for offline SPARQL queries. This approach circumvents API dependency entirely, eliminates the risk of leaking investigative intent to external platforms, and preserves operational security over extended investigation timelines.

The OSINT market’s fundamental problem is not capability — it is epistemology. Platforms can collect more data faster than any human analyst can meaningfully process. The value of an intelligence operation no longer depends on access to data. It depends on the ability to filter, verify, and triangulate — to extract signal from a volume of noise that grows faster than the tools designed to manage it.

The analyst who ran that SpiderFoot scan and got burned understood this afterward. Active modules don’t just collect data. They emit it. Every tool that queries a target also announces the investigation to anyone watching the same infrastructure. Passive collection, controlled triangulation, localized storage, and human verification at each step — these are not workarounds. They are the method.

Join the community: Newsletter → https://projectosint.substack.com/ & Telegram → https://t.me/osintprojectgroup